Evaluating Economic Development Programs with Limited Data

There is a common saying that an analysis is only as good as the data it’s based on. One of the most challenging aspects of program evaluation is lack of good data. EDR Group (now EBP) has evaluated multiple economic development programs, and we repeatedly find that data on actual outcomes is either missing or incomplete.

Why is this the case? Public agencies that administer economic development programs often lack the resources to collect the information necessary for evaluations. It can also be difficult to compel program participants to provide information. And in some cases, privacy laws prohibit certain forms of data collection.

Despite these challenges, as policymakers recognize the importance of ex-post analysis, there is a push for more evaluation of economic development programs in the United States. So, what are evaluators to do? Evaluators can use surveys and interviews to fill information gaps. Economic modeling and statistical analysis can help draw conclusions about wider benefits and the attribution of benefits. Here, we provide examples where EDR Group applied these techniques.

SURVEYS AND INTERVIEWS

Evaluators commonly use surveys and interviews to collect data directly from program participants. This information can supplement administrative records that contain too little data to support an evaluation. The Maine Legislature’s Office of Program Evaluation & Government Accountability (OPEGA) was recently charged with evaluating the state’s New Markets Capital Investment Program, a program modeled after the federal New Markets Tax Credit (NMTC). In assisting OPEGA, EDR Group found that program participants were required to provide only limited information on their activities.

To collect the information we needed, we assisted OPEGA staff as they asked businesses how they used the NMTC-induced investment they received, whether they were able to attract additional private sector investment, and whether the program allowed them to create or retain local jobs. In a separate set of interviews, OPEGA staff asked Community Development Entities, which act as investment pass-throughs, about the investments they received and how they would have used them had Maine’s NMTC program not existed.

There are two key challenges to address when using surveys and interviews for evaluation: (1) avoiding bias by ensuring that no group is excluded, and (2) maximizing the number of responses.

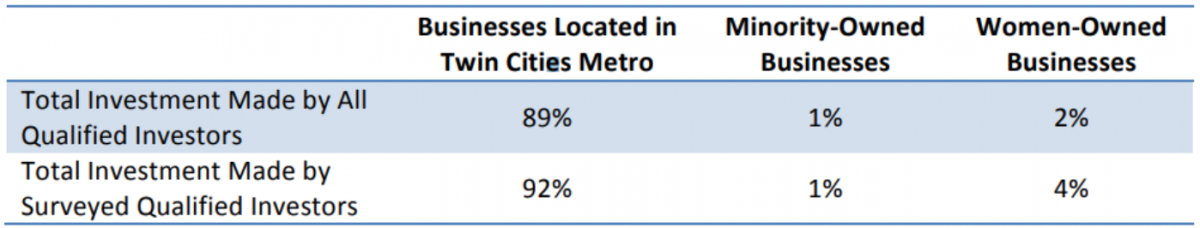

Avoiding bias. When EDR Group evaluated an angel investment tax credit in Minnesota, we sought a representative sample of all program participants, including respondents from both the Twin Cities and Greater Minnesota, and from women- and minority-owned businesses. Evaluators should address representativeness directly by (1) studying the characteristics of program participants; (2) tracking survey responses as they come in; (3) marketing to underrepresented groups to increase response rates; and (4) acknowledging under- or over-representation of respondents or key performance measures in the final survey analysis (see inset table). If response rates differ substantially across groups, evaluators may need to weight responses so that underrepresented groups count in proportion to their share of all participants. Interviewees should also represent the full range of program participants.

Example of how to report the representativeness of your survey. The first row represents the distribution of angel investment made by all investors in the Minnesota program. The second row represents the distribution of angel investment made by the investors who responded to our survey only.
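The weighting step mentioned above can be illustrated with a short sketch. It compares each group's share of all participants with its share of survey respondents, then weights each response by the ratio of the two (a simple form of post-stratification). The regions are taken from the Minnesota example, but every number, variable name, and function name below is hypothetical and is not drawn from the actual evaluation.

```python
from collections import Counter


def group_shares(labels):
    """Return each group's share of the total, e.g. {"A": 0.7, "B": 0.3}."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def poststratification_weights(population_shares, respondent_shares):
    """Weight = population share / respondent share for each group."""
    weights = {}
    for group, pop_share in population_shares.items():
        resp_share = respondent_shares.get(group, 0.0)
        if resp_share == 0.0:
            # A group with no respondents cannot be fixed by weighting.
            raise ValueError(f"no respondents in group {group!r}")
        weights[group] = pop_share / resp_share
    return weights


def weighted_mean(values, groups, weights):
    """Average of values, with each value weighted by its group's weight."""
    total_weight = sum(weights[g] for g in groups)
    return sum(v * weights[g] for v, g in zip(values, groups)) / total_weight


# Hypothetical data: 100 participants, 20 survey respondents.
population = ["Twin Cities"] * 70 + ["Greater Minnesota"] * 30
respondent_regions = ["Twin Cities"] * 18 + ["Greater Minnesota"] * 2
jobs_created = [5] * 18 + [1] * 2  # hypothetical survey answers

pop_shares = group_shares(population)
resp_shares = group_shares(respondent_regions)
weights = poststratification_weights(pop_shares, resp_shares)

unweighted = sum(jobs_created) / len(jobs_created)
weighted = weighted_mean(jobs_created, respondent_regions, weights)

print("Population shares:", pop_shares)
print("Respondent shares:", resp_shares)
print("Unweighted mean jobs per business:", round(unweighted, 2))
print("Weighted mean jobs per business:", round(weighted, 2))
```

In this made-up case, Greater Minnesota businesses are underrepresented among respondents, so the unweighted average overstates jobs per business relative to the weighted one. With only two respondents in a group, the weighted figure rests on very little information, which is a reason to report the imbalance alongside any weighted result.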

Maximizing response rates. Evaluators use a variety of techniques to maximize their survey response rates and number of interviewees, including offering monetary incentives, sending follow-up emails, and placing phone calls to survey participants. EDR Group has had success with each of these methods. When conducting interviews, it is important to find times that work for interviewees and provide flexibility. This could mean arranging interviews or focus groups outside of regular working hours or arranging web meetings and phone interviews for interviewees who cannot attend in person. When done well, surveys and interviews provide critical information that can be used in other steps of an evaluation.

ECONOMIC MODELING AND STATISTICAL ANALYSIS

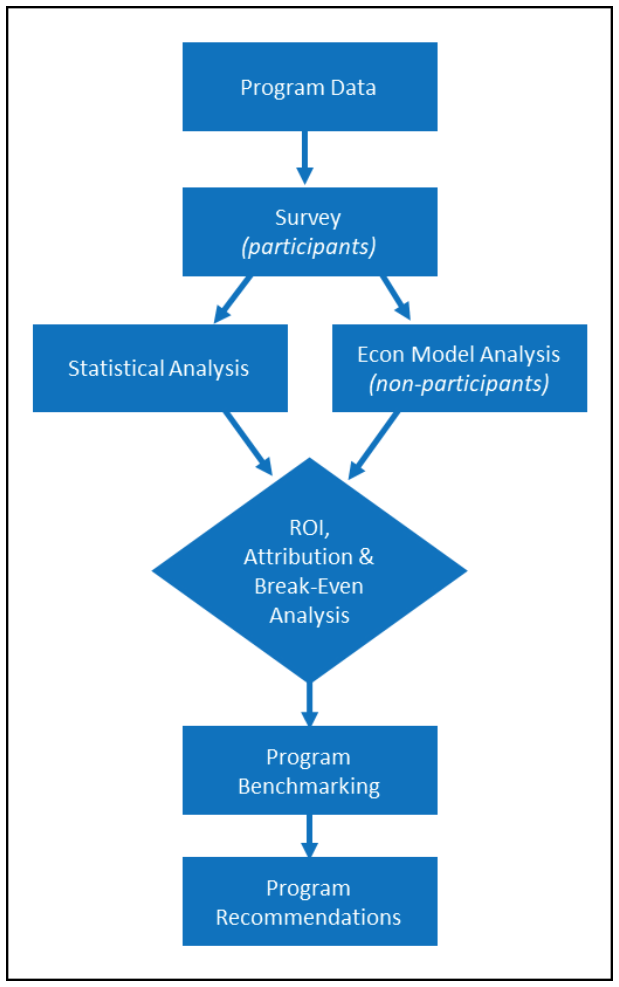

A mixed methods approach to economic development program evaluation.

Public agencies charged with evaluating their economic development programs want to know how program beneficiaries generated economic outcomes. For this reason, evaluators often use economic modeling, quasi-experimental statistical analysis, or a combination of the two. In many cases, program data and surveys are used together with these quantitative techniques. In a review of 16 economic development program evaluations, 6 used economic modeling, 3 used statistical analysis, and 12 used surveys, interviews, or both. Five used a mixed methods approach that included both qualitative and quantitative techniques (see note 1).

Evaluators sometimes use information from surveys and interviews as inputs to economic models. For example, in EDR Group’s Minnesota evaluation, direct job and spending impacts were collected through a business survey and then entered into REMI, a dynamic economic model, to estimate multiplier effects. This method captured impacts on program participants and on non-participants that benefited indirectly from the state’s program (i.e., other businesses in Minnesota).
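To show the basic arithmetic behind a multiplier effect, here is a deliberately simplified sketch. It applies a single static multiplier to survey-reported direct jobs. This is not how REMI works: REMI is a dynamic model with many interacting components, and the multiplier value, job counts, and function names below are all hypothetical.

```python
def total_impact(direct, multiplier):
    """Total impact = direct impact x multiplier (static simplification)."""
    if multiplier < 1:
        raise ValueError("a multiplier below 1 would imply negative spillovers")
    return direct * multiplier


def spillover_impact(direct, multiplier):
    """Impact on non-participants: the total impact minus the direct impact."""
    return total_impact(direct, multiplier) - direct


# Hypothetical inputs: direct jobs summed from survey responses,
# and an assumed jobs multiplier for the state economy.
direct_jobs = 120
assumed_multiplier = 1.5

total_jobs = total_impact(direct_jobs, assumed_multiplier)
spillover_jobs = spillover_impact(direct_jobs, assumed_multiplier)

print("Direct jobs (survey):", direct_jobs)
print("Jobs at other businesses:", spillover_jobs)
print("Total jobs:", total_jobs)
```

The point of the sketch is only that the survey supplies the direct figure and the model supplies everything beyond it. Any error in the survey-based input is scaled up along with the result.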

Quasi-experimental statistical analysis—which compares program beneficiaries with those not affected by a program—can also benefit from survey and interview findings. For instance, evaluators can survey participating businesses about specific characteristics to identify a “control group” of similar businesses that did not participate. Also, surveys and interviews can improve the soundness of statistical analyses by providing data that may be missing from program datasets.
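One way to picture the control group idea is nearest-neighbor matching: pair each participating business with the most similar non-participant, then compare outcomes across the pairs. The sketch below is a minimal illustration under stated assumptions, not the method used in any particular evaluation. The business records, the characteristics chosen for matching, and the outcome measure are all hypothetical.

```python
import math
from statistics import mean, pstdev


def scale_factors(businesses, features):
    """Standard deviation of each feature, used to put features on one scale."""
    return {f: (pstdev([b[f] for b in businesses]) or 1.0) for f in features}


def distance(a, b, features, scales):
    """Euclidean distance between two businesses on the scaled features."""
    return math.sqrt(sum(((a[f] - b[f]) / scales[f]) ** 2 for f in features))


def match_controls(participants, candidates, features):
    """For each participant, pick the closest non-participant (with replacement)."""
    scales = scale_factors(participants + candidates, features)
    return [
        min(candidates, key=lambda c: distance(p, c, features, scales))
        for p in participants
    ]


def mean_outcome_gap(participants, controls, outcome):
    """Average participant-minus-control difference in the outcome."""
    return mean(p[outcome] - c[outcome] for p, c in zip(participants, controls))


# Hypothetical survey and administrative data.
participants = [
    {"name": "P1", "employees": 10, "age_years": 3, "job_growth": 4},
    {"name": "P2", "employees": 50, "age_years": 8, "job_growth": 6},
]
non_participants = [
    {"name": "C1", "employees": 12, "age_years": 2, "job_growth": 2},
    {"name": "C2", "employees": 48, "age_years": 9, "job_growth": 5},
    {"name": "C3", "employees": 200, "age_years": 20, "job_growth": 1},
]

features = ["employees", "age_years"]
controls = match_controls(participants, non_participants, features)
gap = mean_outcome_gap(participants, controls, "job_growth")

for p, c in zip(participants, controls):
    print(p["name"], "matched to", c["name"])
print("Average job growth gap:", gap)
```

Matching on observed characteristics only controls for what is observed. Businesses that choose to participate may differ in ways a survey does not capture, so a gap like this one is suggestive evidence rather than proof of program impact.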

A COMBINED APPROACH

The best program evaluations use multiple methods to make the most of limited data while avoiding potential biases (see inset diagram). Each step in the evaluation process is related to the others. Program data informs the design of surveys and interviews, which provide information evaluators can use in statistical analysis and economic modeling. When used together, these methods can help evaluators answer key questions about a program’s return on investment and overall effectiveness.

With analysis findings in hand, evaluators can benchmark program performance and recommend improvements. As states and municipalities come under increased pressure to generate value for society, high-quality evaluations can help improve the performance of economic development programs.

1) Author’s analysis of evaluations found in the State Tax Incentive Evaluations Database, a resource available through the National Conference of State Legislatures (http://www.ncsl.org/research/fiscal-policy/state-tax-incentive-evaluations-database.aspx), plus several other evaluations the author is familiar with.